Artificial intelligence has fundamentally reshaped the landscape of higher education, but the technology’s ultimate value hinges on something far more foundational than any specific model: digital literacy. As AI becomes deeply embedded in research, writing, and instructional workflows, the ability to evaluate and challenge AI-generated content is now a requirement for institutional responsibility and academic success.

The Challenge of Conversational Polish

In recent years, students and faculty alike have adopted AI for everything from conceptual tutoring to LMS-integrated productivity tools. However, capability alone is not a solution; understanding is.

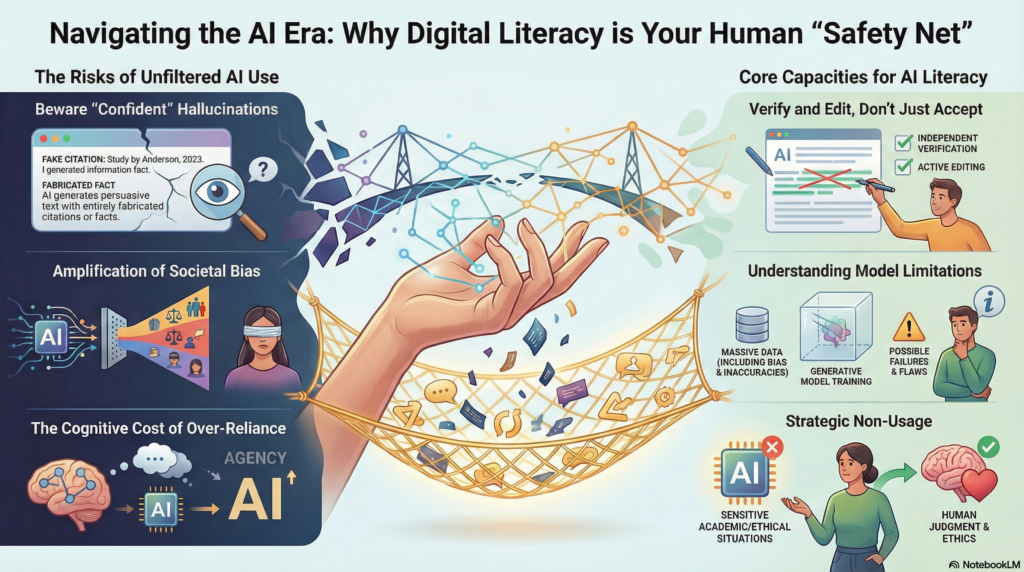

AI systems often produce fluent, confident text even when the information is fabricated. These hallucinations can appear as authoritative summaries or plausible citations that dissolve only under scrutiny. Without strong digital literacy, users may:

- Accept outputs at face value, unaware of ethical risks.

- Misinterpret conversational polish as evidence of consciousness or intent.

- Develop emotional over-reliance on the technology.

- Weaken critical thinking skills by outsourcing too much cognitive effort.

The NPR quiz below invites you to step into the role of Rick Deckard from Blade Runner:

Can you spot AI videos from real ones? Take our quiz (NPR)

Intersection with Inclusive Design

Addressing these gaps requires more than a how-to guide for tools; it requires a framework that ensures all learners can engage with AI safely. This is where digital literacy meets inclusive design.

As noted in our friend Chris Kelly’s HigherEdAV.com article, Universal Design for Learning, inclusive environments must go beyond checkboxes to intentionally reduce barriers and promote autonomy. By providing structured support and transparent expectations, institutions can build learner agency, a value that mirrors the core principles of UDL. Coupling UDL with digital literacy allows educators to walk the line between using AI in the classroom and barring it.

Defining AI Literacy Capacities

Digital literacy in this new era involves several interconnected capacities that should be treated as an academic core rather than an optional workshop:

- Understanding how generative models are trained and why they fail.

- Recognizing hallucinations, inherent societal biases, and missing context.

- Verifying information independently and responsibly.

- Editing, rather than simply accepting, AI-generated text.

- Knowing when not to use AI, particularly in sensitive or ethical situations.

A Shared Institutional Responsibility

Digital literacy is no longer a niche skill for a few early adopters; it is a shared responsibility across AV/IT teams, teaching and learning centers, and academic leadership. As AI becomes a ubiquitous force on campus, the need for human oversight and responsible design grows more urgent.

In the next column, we will examine the rise of AI detectors, the legal risks they create, and why relying on detection may ultimately undermine academic integrity.