Most of us move through life with a quiet assumption running in the background. We assume that our minds are basically telling us the truth. Maybe not perfect truth, but close enough. We remember what happened. We know why we did what we did. We understand our motives. We can explain ourselves to ourselves.

And that makes sense, but research is showing us that we are actually pretty unreliable narrators of our own lives.

The mind does not simply record reality and hand it back to us untouched. It interprets. It edits. It fills in gaps. It protects identity. It tells stories that feel coherent, even when coherence comes at the expense of accuracy.

We want the brain to function like a camera or maybe a courtroom transcript, faithfully documenting what happened and why. Instead, a lot of research points towards something closer to a screenwriter under pressure, trying to make the plot hold together, trying to keep the lead character sympathetic, trying to make the whole thing make sense after the fact.

… just imagine the scene from My Cousin Vinny with the grits guy here…

And of course the lead character is ourselves.

A quick disclaimer: I’m going to reference and link to studies and writings by a bunch of people. I’m not an expert in this field, but I’m sharing what has made sense to me in hopes that it might make sense to you.

One of the things that grabbed me first was how often people appear to act for reasons they do not consciously understand, then confidently explain those actions as if they had full access all along. In some old-school studies by Richard Nisbett and Timothy Wilson, people were influenced by factors they did not notice, then supplied perfectly plausible explanations for their choices anyway. The explanations sounded thoughtful, but that doesn’t make them true.

We tend to believe that if we are honest or introspective enough, we can simply look inward and identify the real cause of our beliefs, choices, and reactions. But that’s not what the research is showing us about ourselves. Honest introspection is not always a direct line to truth. On its own, it’s more like a polished after-action report written by a spokesperson who arrived late to the briefing.

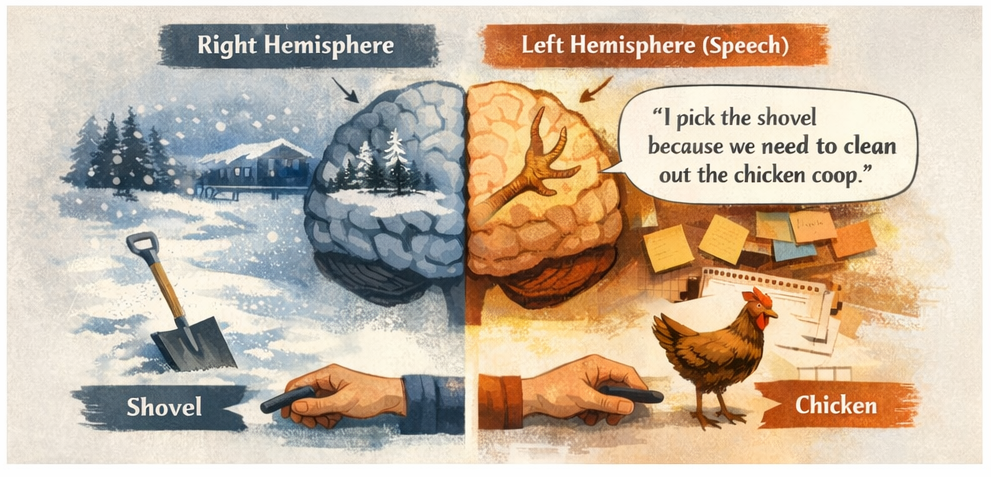

Next, let’s look at the split-brain research associated with Michael Gazzaniga. In one famous experiment, the participants were people whose brain hemispheres had been surgically separated as a treatment for severe seizures, meaning the two sides could no longer communicate with each other. Different information was shown to the left and right hemispheres, which control the right and left sides of the body respectively. Each participant’s hands responded in ways that made sense based on what each hemisphere had seen. Here’s the thing: language is housed almost entirely in the left hemisphere. But when asked to explain the actions driven by the right hemisphere, the left hemisphere did not say, “I do not know.” It generated a story. A confident one. A story that stitched the behavior into something that sounded reasonable and whole.

https://academic.oup.com/brain/article/140/7/2051/3892700

https://people.psych.ucsb.edu/gazzaniga/PDF/The%20Split%20Brain%20in%20Man.pdf

The human brain wants to look like it’s on top of everything. It does not like uncertainty. It does not like saying, “I am not sure why I did that.” So it often reaches for a story that protects continuity, identity, and emotional stability.

In other words, the mind isn’t only a perceiver… it’s a publicist.

There is a version of this that shows up in everyday life all the time. We miss a deadline and tell ourselves we were overcommitted, not avoidant. A project fails and we quietly rewrite the causes so our own blind spots stay out of frame. An argument goes sideways and, in our private retelling, we somehow keep ending up as the most reasonable person in the room. None of this requires bad character. It may just be how the machinery works.

Leon Festinger’s work on cognitive dissonance pushed this even further. When reality collides with a deeply held belief, people do not always revise the belief in clean, rational ways. Sometimes they double down, reinterpret the evidence and build a new explanation sturdy enough to preserve the old self.

The famous case involving Dorothy Martin and her apocalyptic group remains one of the most revealing examples. When the prophecy of global doom they believed in failed, the faithful did not simply abandon it. Instead, a new explanation emerged to resolve the unbearable tension. The world had been spared because of their faithfulness. A shattered prediction was converted into confirmation.

It is tempting to hear a story like that and locate it far away, or to file it under cult behavior, extremism, or fringe irrationality. But I do not think that is the wisest reading. The more uncomfortable takeaway is that the same basic mechanism is available to all of us in more socially acceptable forms. We all have stories we protect because admitting the full truth would cost us something. It could be pride, identity, belonging, respect, or even the feeling that our lives have made sense.

That does not excuse self-deception, but it may explain why it is so durable.

If the mind is this selective, this willing to trade some accuracy for emotional or social stability, why would evolution have selected for that? Wouldn’t a more objective mind have a clear advantage?

Here again, the research points in a more complicated direction. Thinkers like Daniel Dennett and Ryan McKay have written about misbelief not simply as malfunction, but as what they call a “forgivable design limitation.” So… the mind may not be failing at its job. It may be doing the job it was shaped to do.

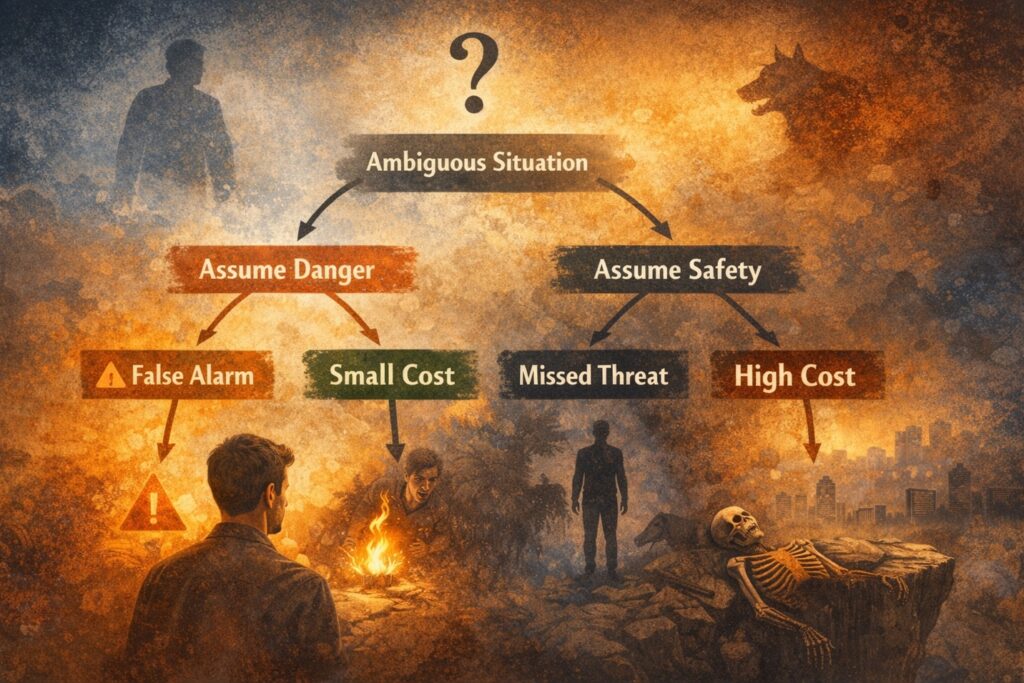

Error management theory helps explain this. In many situations, especially under uncertainty, the cost of one kind of mistake is much higher than the cost of another. If early humans occasionally mistook something harmless for something dangerous, they paid a manageable price. If they repeatedly mistook danger for safety, the price could be fatal. Better to be jumpy and alive than calm and dead.

https://labs.la.utexas.edu/buss/files/2015/09/Error_Management_Theory_2000.pdf

That logic is still very much with us. It travels into social life, status, attachment, belonging, and the stories we tell about ourselves. And once you start looking for it, you can see how often the human mind seems to prefer a survivable distortion over a devastating truth.

That is where this whole line of thought could become cynical if we let it. If our minds are interpreters, editors, and protectors rather than neutral observers, then what are we supposed to do with that? Just shrug and accept that we are all trapped inside our own narratives?

The hard truth is that, in isolation, we very much can be trapped in a self-sustaining feedback loop. If each of us is an unreliable narrator, then we need other views and perspectives to assemble a full picture.

From there, we look at the work of John Teske. As I understand it, Teske pushes against the idea that the mind is best understood as a sealed-off internal computer. He points toward a more distributed picture of human knowing, one shaped by embodiment, environment, tools, and relationships. Thinking is not just something that happens inside the skull. It is entangled with the body, with habits, with social life, and with the structures around us.

https://philpapers.org/rec/TESFET

What this brings to the surface is the limits of introspection. If the mind is already prone to self-protective storytelling, then the answer is probably not to lock ourselves in a room and think harder until pure truth appears. That may just give the internal narrator more time at the microphone.

Sometimes the reality check has to come from outside us.

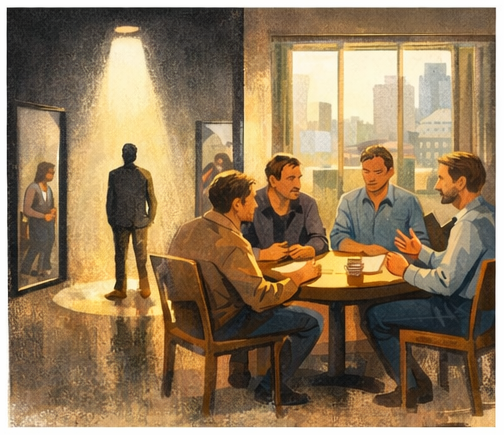

That is where trusted relationships start to look less optional. Not just emotionally valuable but epistemically valuable. Useful not only because they comfort us, but because they correct us. They can become one of the ways we stay in contact with reality when our own internal storytelling starts drifting. A good friend does this. A strong partner does this. A healthy team does this. A real community does this.

They do not merely echo back the version of us we most want to hear. They’re up for interrupting it. They ask us the harder question. They remember what we left out. They notice the pattern. They challenge the self-serving interpretation. They tell us, kindly or not so kindly, that the story we are telling may not be the whole story.

That kind of correction is not comfortable. But maybe that’s the point. If the private mind naturally seeks coherence, image protection, and survivable narrative, then people who love us well are not just companions. They are stabilizers. They are sometimes the difference between self-reflection and self-mythologizing.

I think that has implications far beyond personal relationships. It informs leadership. It guides workplaces. It anchors families. It is imperative for communities committed to telling the truth about themselves.

A leader without honest relationships can become dangerous very quickly, not only because of power, but because power reduces correction. The more insulated they are, the easier it becomes to confuse their internal narrative with reality.

Sounds familiar, doesn’t it?

The same thing can happen to teams, departments, even whole institutions. We can begin telling flattering stories about why things are hard, why outcomes are disappointing, why tensions exist, why other people are the problem, why we are already doing enough. And if no one is close enough or honest enough to challenge those stories, they harden.

That is one reason why genuine and authentic community matters in a deeper sense than we sometimes admit. Some communities reinforce distortion or reward performance over honesty or protect group myths at all costs. But healthy community, the kind worth building, gives us something more demanding than affirmation. It gives us calibration.

Authentic community opens up a pathway to being real. Not in the shallow sense of being blunt or unfiltered. In the deeper sense of remaining connected to what is true, even when the truth is inconvenient and undermines the story we would rather tell. It is there to reveal that our motives were mixed, our memory was selective, or our certainty was overstated.

If that sounds like a high bar, it is. Which is why this article doesn’t finish with a tidy prescription. I’m not an expert announcing settled conclusions. I am writing as someone trying to understand what this research might mean for day-in, day-out human life.

And what it seems to mean, at least to me, is this: if our minds are as skilled at interpretation and protection as this work suggests, then one of the greatest gifts in life may be people who help us see more clearly than we can see alone.

People who can say, with care, “That is not how it happened.”

People who can hold the mirror steady.

People who do not just support our preferred identity but help refine it.

People who remind us that being known is more important than being right.