AI detection software works by scanning text for linguistic patterns, such as uniform sentence structures and predictable phrasing, that are typically associated with large language models. While these tools were rushed into classrooms to preserve academic integrity, they are increasingly under fire for their lack of accuracy and the ethical risks they pose to the student-teacher relationship.
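To make "uniform sentence structures" concrete, here is a minimal Python sketch of one toy signal: how much sentence length varies within a passage. It is an illustration only, not how any particular detector is implemented. The function name, the sample texts, and the choice of signal are all assumptions made for this example.

```python
import re
from statistics import mean, pstdev


def sentence_length_variation(text: str) -> float:
    """Hypothetical helper: coefficient of variation of sentence lengths (in words).

    Values near 0 mean every sentence is about the same length ("uniform").
    """
    # Naive sentence split on ., ! and ?; good enough for a toy example.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    lengths = [len(s.split()) for s in sentences]
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


# Two made-up passages, both written by a human for this example.
uniform = "The cell divides in two. The process takes one hour. The result is two cells."
varied = "Cells divide. After roughly an hour of carefully staged steps, one cell has become two. Simple."

print(round(sentence_length_variation(uniform), 2))
print(round(sentence_length_variation(varied), 2))
```

The first passage scores as perfectly uniform even though a person wrote it, which hints at why a concise, regular writing style can be mistaken for machine output by a pattern-based signal.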

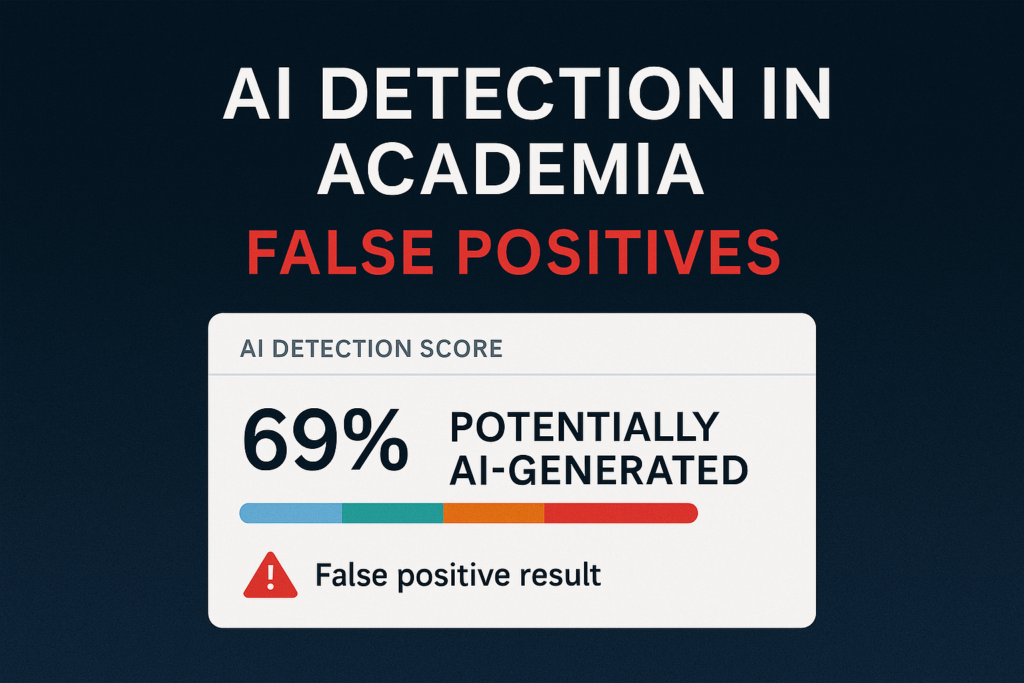

The Hidden Cost of False Positives

The most significant technical hurdle for these tools is the high rate of false positives. AI detectors frequently flag genuine student work as machine-generated, particularly when the writer:

- Is a multilingual learner or uses a concise, structured writing style.

- Utilizes grammar-assistance tools like Grammarly or Superhuman for clarity and tone adjustment.

- Relies on digital resources for accessibility or disability support.

According to research from the San Diego Legal Center, the improper use of these detectors fosters a culture of distrust and can lead to unfair disciplinary actions.
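A short worked calculation shows why even a seemingly small false positive rate matters at classroom scale. The sketch below is in Python, and every number in it is a hypothetical assumption chosen for illustration (class size, share of AI-written essays, and detector rates); none of them come from a vendor or a study.

```python
def flagged_breakdown(n_essays, ai_share, true_positive_rate, false_positive_rate):
    """Hypothetical helper: split a detector's flags into correct and wrong ones.

    Returns (number of human-written essays wrongly flagged,
             share of all flags that are wrong).
    """
    ai_written = n_essays * ai_share
    human_written = n_essays - ai_written
    true_flags = ai_written * true_positive_rate
    false_flags = human_written * false_positive_rate
    total_flags = true_flags + false_flags
    share_wrong = false_flags / total_flags if total_flags else 0.0
    return false_flags, share_wrong


# Assumed scenario: 1,000 essays, 10% AI-written,
# detector catches 90% of AI text and wrongly flags 5% of human text.
false_flags, share_wrong = flagged_breakdown(1000, 0.10, 0.90, 0.05)
print(f"Wrongly flagged students: {false_flags:.0f}")
print(f"Share of flags that are wrong: {share_wrong:.0%}")
```

Under these assumed numbers, about 45 students would be wrongly flagged, and roughly one in three flags would point at genuine student work. Different assumptions give different results, but the pattern holds whenever most submissions are human-written: the flags alone cannot tell the two groups apart.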

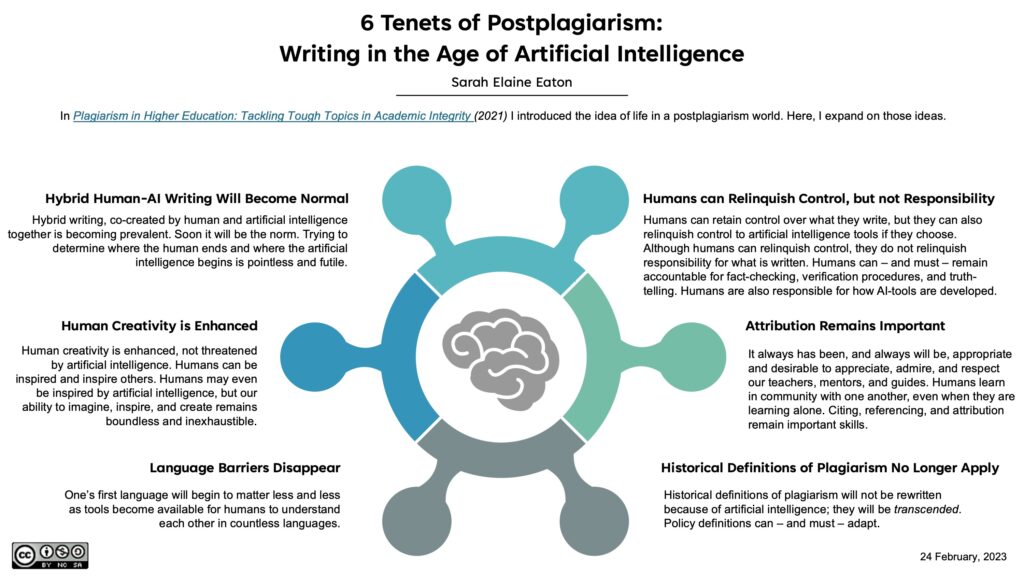

Emerging research has proposed a framework of hybrid writing, also known as the 6 Tenets of Writing. In this framework, AI is positioned as a collaborative tool that can support writing processes while humans remain accountable for authorship, attribution, and intellectual responsibility. Hybrid writing practices are described as reducing language barriers and challenging traditional notions of plagiarism, provided that users remain responsible for evaluating, editing, and ethically integrating AI‑generated content into their work.

The 6 Tenets reinforce the importance of framing AI as a human‑in‑the‑loop tool rather than an autonomous replacement for scholarly labor. The framework encourages teaching these practices explicitly to increase students' digital literacy. The figure below shows how educators can move away from detector tools and incorporate hybrid writing.

Schools are dropping AI detection software because it produces false positives, frustrating both students and faculty.

Legal and Ethical Risks

When institutions treat a detection score as authoritative evidence, they enter a legal minefield. Many vendors acknowledge their tools cannot guarantee 100% accuracy, yet these scores often become the central evidence in misconduct cases.

Because detector algorithms are opaque and their scores cannot be independently verified, due process becomes difficult to defend, leading to:

- Formal grievances and lawsuits from students challenging faulty reports.

- A “chilling effect” where students avoid legitimate learning technologies out of fear of being flagged.

- An erosion of trust as faculty become suspicious of any polished work.

From Detection to Design

As we discussed in our previous look at inclusive design, focusing solely on policing addresses symptoms rather than causes. Chris Kelly’s article on Universal Design for Learning makes a related point: inclusive environments must prioritize autonomy and reduce barriers.

Instead of an “arms race” between detectors and generative tools—which detection algorithms are likely to lose as AI becomes more personalized—institutions should shift toward authentic assessment.

The following video explains how students can use hybrid writing techniques, such as keeping sources in a reference manager like Zotero, to document their research practices. These methods give students a record they can use to demonstrate that the work is their own.

Methods for AI in Classrooms

| Topic Area | Primary Challenges | Key Benefits or Use Cases | Recommended Institutional Strategy | Impact on Academic Integrity | Inferred Risks |
| --- | --- | --- | --- | --- | --- |
| AI Detection & Academic Integrity | High rate of false positives, disproportionate penalization of multilingual or disabled learners, and lack of transparency in detector algorithms. | Identifying machine-generated prose to maintain standards. | Shift from ‘detection to design’ by prioritizing transparent AI policies, authentic assessments, and faculty development for assessment adaptation. | Erosion of trust between students and faculty; chilling effect where students avoid legitimate tools due to fear of being flagged. | Legal liability for institutions due to due process violations and long-term degradation of student-teacher relationships into an adversarial dynamic. |
| Digital Literacy | AI hallucinations (fabricated citations), emotional over-reliance, weakening of critical thinking, and bias in outputs. | Writing refinement, language practice, conceptual tutoring, and streamlining of instructional workflows. | Treat AI literacy as an academic core; embed it into general education and faculty professional development, focusing on technical and ethical aspects. | Misinterpretation of AI polish as evidence of intent or consciousness; risk of accepting inaccurate data without scrutiny. | Potential for a permanent decline in human cognitive independence and the loss of traditional research methodologies. |
| Inclusive Design & UDL | Barriers for diverse learners and the ‘accessibility checkbox’ approach to learning environments. | Reducing barriers, anticipating diverse needs, promoting learner autonomy, and fostering student agency. | Align AI literacy goals with Universal Design for Learning (UDL) to create structured support and transparent expectations. | Reduces incentives for misuse by providing clear opportunities for building agency and responsible engagement. | Potential for systemic exclusion of non-traditional learners if AI literacy is not treated as a fundamental right or skill. |

A Sustainable Path Forward

The future of academic integrity relies on human-centered strategies rather than software alone:

- Instructional Practice: Differentiating between productive AI assistance and academic misconduct.

- Assignment Design: Creating tasks that require intentional reflection, verification, and attribution.

- Digital Literacy: Training faculty and students to understand the limitations of both AI and the tools meant to catch it.

Ultimately, we must ask whether the risks of detection align with the values of higher education. In our next column, we will explore how AI can support assessment design through better feedback, rubrics, and strategies that strengthen student skills.