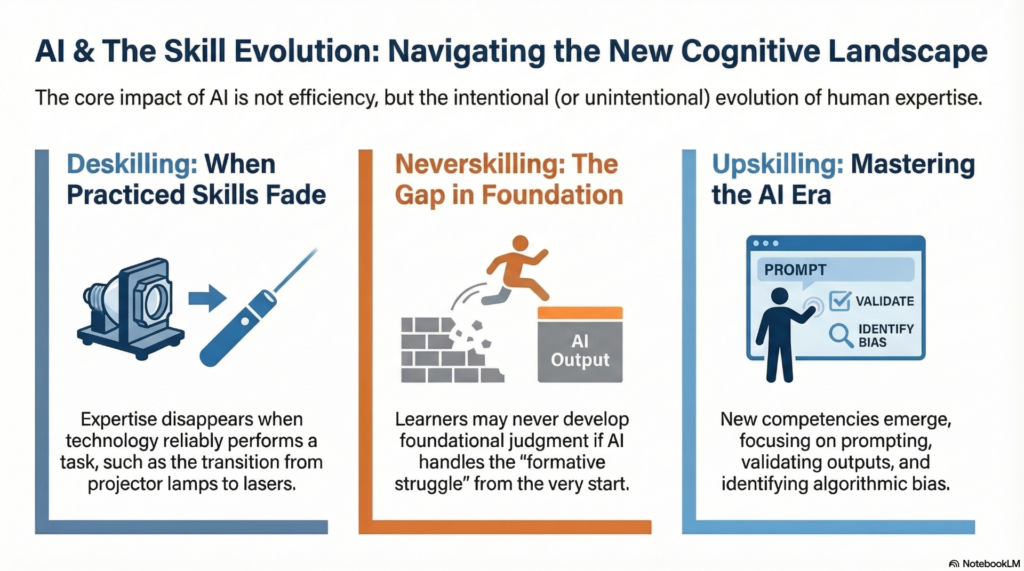

One of the most persistent questions surrounding artificial intelligence in education is not whether AI works, but what it does to human skill over time. As tools become more capable, they don’t simply speed up tasks; they reshape which skills are practiced, which are deprioritized, and which never develop at all.

I attended a recent lecture by Dr. Whalon that discussed the framework of deskilling, upskilling, and never-skilling. A webinar on the topic, titled "Preparing for AI Integration in Clinical Education," is also available online.

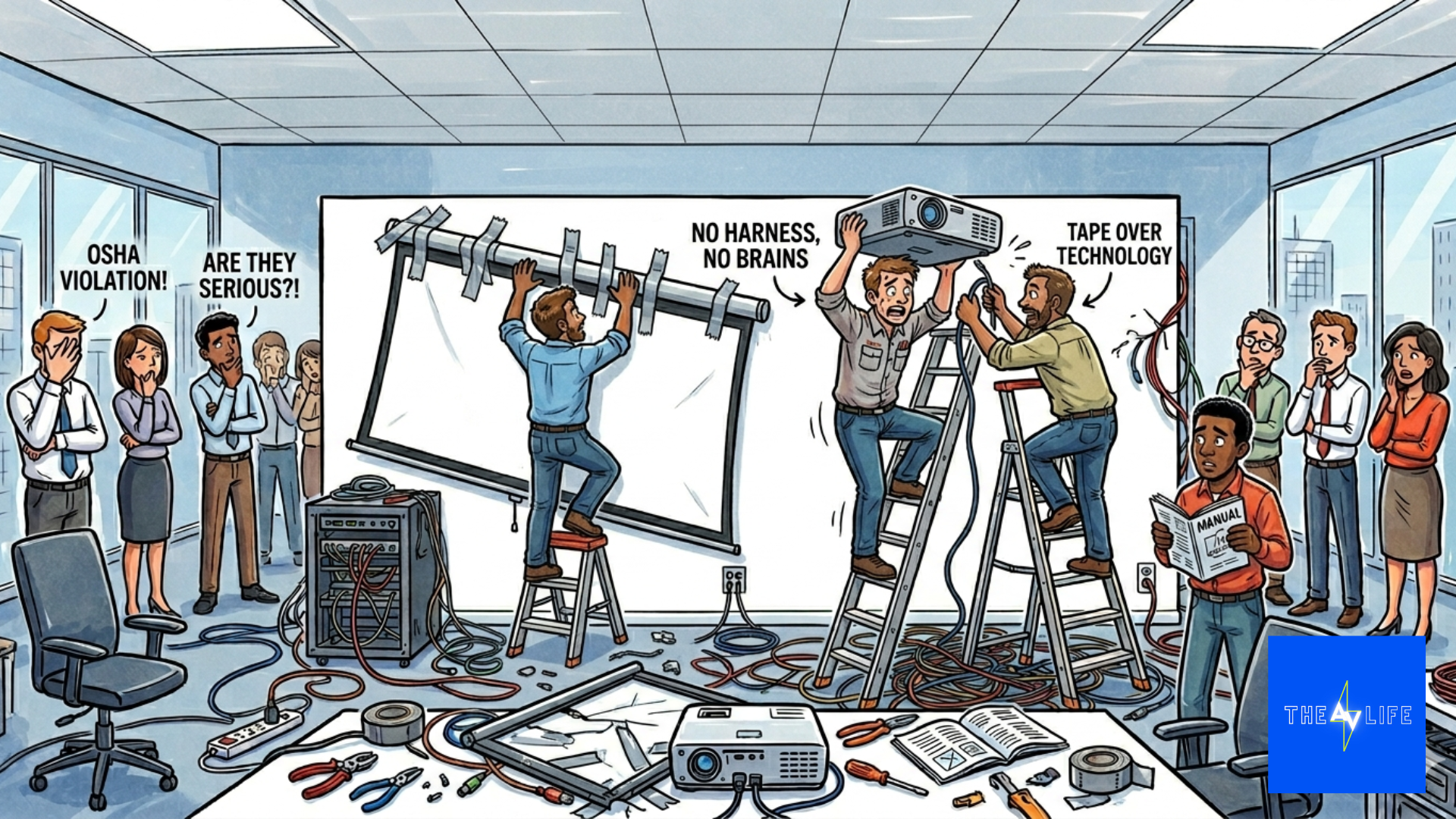

Deskilling: When a Skill Fades Because a Tool Replaces It (A Loose Connection to AV)

Deskilling occurs when humans stop practicing a skill because technology reliably performs it for us. The skill may still exist, but it no longer receives attention, repetition, or cultural value.

A simple project management example illustrates this well.

For decades, audiovisual projects required technicians to know how to change projector lamps. This was not optional knowledge. Lamp life, heat, alignment, and replacement schedules were part of the professional skill set. When lasers replaced lamps (lamps, not "bulbs," as a former colleague of mine would insist 😊), that expertise did not evolve; it largely disappeared. There was no reason to train new technicians on lamp replacement because the technology no longer required it.

This phenomenon is deskilling in action.

AI introduces similar dynamics in education. Tasks like drafting discussion prompts, summarizing readings, generating quiz questions, or cleaning up prose are increasingly automated. When these tasks disappear from daily practice, instructors and students alike lose repeated exposure to the thinking processes embedded in them.

Deskilling is not inherently negative. Few people argue that we should still train projectionists to replace lamps “just in case.” The danger emerges when we fail to notice which cognitive skills are being quietly retired alongside the task.

Never‑Skilling: When a Skill Is Never Learned at All

Never‑skilling goes a step further. It describes skills that future learners never acquire because the task is fully delegated to technology before they encounter it.

In medicine, this is already visible. Many clinicians no longer manually analyze samples under microscopes; machines handle the process with speed and accuracy. For routine diagnostics, this is a net positive. But it raises critical questions about what happens when edge cases arise and the underlying human expertise no longer exists in sufficient depth.

In education, AI risks creating similar gaps.

If students rely on AI to structure arguments, debug code, translate language, or generate explanations from the start of their learning journey, they may never develop foundational competencies. The concern is not that AI assists learning, but that learners skip the formative struggle that builds judgment, confidence, and self‑efficacy.

Never‑skilling is difficult to reverse because you cannot remediate a skill that was never practiced. This places significant responsibility on institutions to decide where AI is allowed to assist and where it should be deliberately constrained.

Upskilling: Learning New Skills to Work With AI

Upskilling is often the most celebrated of the three, and for good reason. It represents learning new competencies that emerge alongside new technologies.

In the context of AI, upskilling includes:

- Learning how to write effective prompts

- Evaluating and validating AI outputs

- Identifying bias and hallucinations

- Integrating AI into workflows ethically and transparently

- Teaching students how to critique, not just consume, AI‑generated content

Whether AI leads to upskilling rather than deskilling or never-skilling rarely depends on the technology itself. It depends on the surrounding culture, coaching, and clarity.

Designing for Intentional Skill Futures

Deskilling, upskilling, and never‑skilling are not abstract theories. They are design choices.

Every syllabus statement, assignment structure, and policy decision communicates which skills matter and which can be delegated. When those decisions are left implicit, AI fills the gap.

The lamp‑to‑laser transition feels mundane until you realize how cleanly it maps onto AI adoption. No modern AV project requires lamp‑changing skills, not because lasers are trendy, but because the underlying problem has been solved differently.

Higher education has a history of letting tools drive pedagogy rather than the reverse. AI intensifies this risk because of its speed, accessibility, and persuasive outputs. If institutions default to convenience, they may unintentionally design learning environments that privilege efficiency over capability.

If AI is going to remain embedded in education, the most important question is not what it can do, but what we are willing to stop doing, what we are willing to relearn, and what we refuse to let disappear.

Lasers made lamp‑changing obsolete. AI does not get to decide on its own which human skills meet the same fate.